The novelty phase of generative AI is largely over for content teams. Most creative operations leads have moved past the initial excitement of generating a single high-quality image to the more difficult challenge of operationalization. The primary bottleneck is no longer the ability to generate a visual; it is the ability to generate five hundred visuals that all look like they belong to the same brand, same campaign, and same world.

Scaling visual production requires a shift from prompt-based luck to a structured workflow. For teams using the Banana AI ecosystem, this transition involves a modular approach where specific models like Nano Banana and Nano Banana Pro are used for high-frequency assets, while specialized tools manage the refinement process.

Operationalizing these tools requires an understanding of where the technology sits in its maturity cycle. We are in an era of “assisted generation,” where human editors still provide the connective tissue between disparate AI outputs.

Defining the Production Stack: Nano Banana vs. Nano Banana Pro

Content teams often struggle with tool fatigue. Switching among a dozen different models usually leads to a fragmented visual identity. The strategy used by many high-volume production houses is to designate a “core model” for the bulk of the stylistic work.

Nano Banana has emerged as a preferred tool for rapid ideation and social-first content because of its speed and efficiency. However, when teams move into high-fidelity territory—such as website headers, print-ready assets, or complex cinematic compositions—Nano Banana Pro becomes the primary engine. The “Pro” distinction here isn’t just a marketing label; it represents a higher threshold for spatial consistency and prompt adherence.

One of the first limitations teams encounter is the “style drift” that occurs when multiple designers use the same tool but different prompting logic. To counter this, teams are moving away from free-form prompting and building shared “style libraries” within the Nano Banana Pro environment. Each library entry locks in specific seeds and style descriptors so that the outputs from a designer in London closely match the outputs from a designer in New York.
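A style library of this kind can be sketched in a few lines of Python. This is only an illustration: the `StylePreset` class, its field names, the seed value, and the request dictionary are all hypothetical and do not describe the actual Nano Banana Pro interface.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StylePreset:
    """A shared, locked style definition (all names here are hypothetical)."""
    name: str
    seed: int                      # fixed seed shared by every designer
    descriptors: tuple[str, ...]   # style phrases appended to every prompt

    def build_request(self, subject: str) -> dict:
        """Combine a designer's subject with the locked style settings."""
        prompt = ", ".join((subject.strip(), *self.descriptors))
        return {"prompt": prompt, "seed": self.seed, "style": self.name}


# One library shared by every office, instead of per-designer prompt habits.
STYLE_LIBRARY = {
    "spring_campaign": StylePreset(
        name="spring_campaign",
        seed=1234,
        descriptors=("soft morning light", "pastel palette", "35mm film grain"),
    ),
}

preset = STYLE_LIBRARY["spring_campaign"]
london = preset.build_request("a ceramic mug on a desk")
new_york = preset.build_request("a ceramic mug on a desk ")
print(london == new_york)  # both offices send an identical request
```

Freezing the preset means a designer cannot quietly tweak the seed or descriptors for one job; a change has to go through the shared library.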

The Canvas Workflow: Beyond the Prompt Box

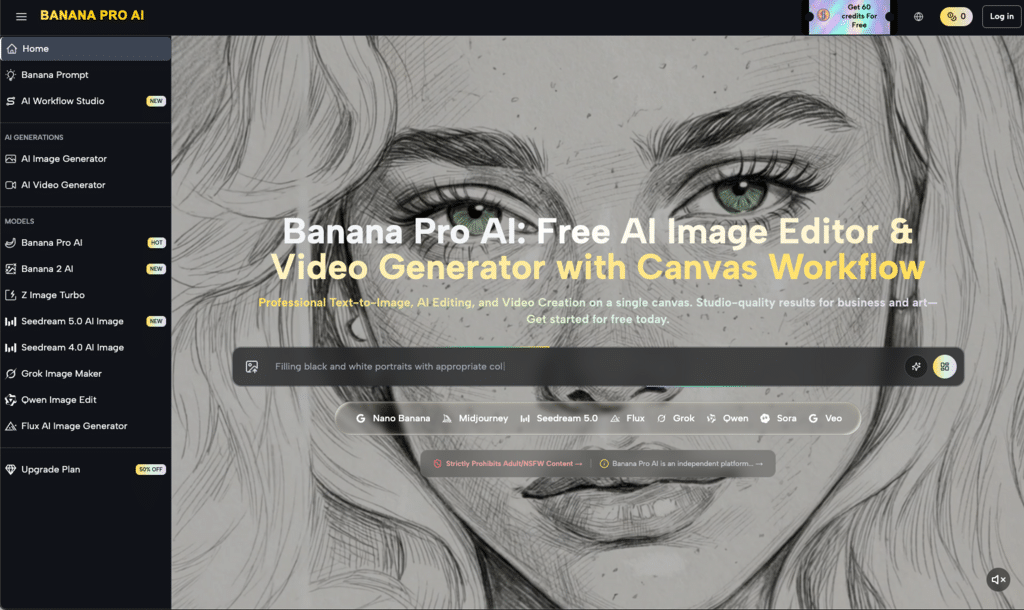

The traditional “text box and generate” model is insufficient for professional production. It lacks the granularity needed for iterative design. A more effective approach is the canvas-based workflow, which integrates the initial generation with immediate modification tools.

This is where the AI Image Editor becomes central to the team stack. Rather than discarding a “nearly perfect” image because a hand is distorted or a background element is distracting, teams can use localized editing to fix specific regions. This represents a significant shift in resource allocation. In a traditional workflow, a designer might spend two hours chasing the “perfect” prompt. In an operationalized workflow, that same designer spends ten minutes generating a “good enough” base image and twenty minutes refining it through targeted AI tools, roughly thirty minutes in total.

Leveraging Image-to-Image for Brand Alignment

For many content teams, the biggest hurdle is brand consistency. You cannot simply tell a generic AI model to “make it look like our brand.” This is where the image-to-image capabilities of Banana Pro prove essential.

By using an existing brand asset—perhaps a photograph from a previous shoot or a 3D render of a product—as the structural reference, teams can use Nano Banana Pro to generate new environments around that asset. This keeps the physical proportions of the product or subject consistent while allowing for infinite variations in lighting, seasonal context, and background.

It is worth noting, however, that current AI models still face significant challenges with precise typography and complex spatial relationships between three or more distinct objects. Teams that expect the tool to handle 100% of the composition often find themselves frustrated. The most successful operators treat the output as a high-level “plate” that will likely require a final pass in a standard design suite for text overlays and precise brand colors.

Integrating Video into the Creator Pipeline

While static imagery remains the backbone of most digital strategies, the demand for short-form video has forced content teams to explore generative motion. The transition from a static image created in Nano Banana Pro to a moving asset requires a consistent visual thread.

The modular nature of the Banana AI platform allows teams to take their high-fidelity image outputs and pipe them directly into video generation models like Seedance 2.0. This prevents the “visual jump” that often occurs when teams use one tool for images and an entirely different ecosystem for video.

In a production environment, this looks like a unified pipeline:

- Ideation: Quick mocks in Nano Banana to test color palettes and lighting.

- Standardization: High-resolution generation using Nano Banana Pro for the final “keyframe.”

- Enhancement: Using the AI Image Editor to clean up artifacts and prepare the file for motion.

- Animation: Bringing the cleaned image into the video generator to create social-ready clips.

This workflow reduces the cognitive load on creators. They aren’t learning five different interfaces; they are learning one integrated system that handles different phases of the lifecycle.
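The four stages can be modeled as an ordered list of steps that each asset passes through. The sketch below is a placeholder only: the stage functions record which tool would be used rather than calling any real service, and `Asset`, `make_stage`, and `run_pipeline` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Asset:
    brief: str
    stages_done: list[str] = field(default_factory=list)


def make_stage(name: str, tool: str) -> Callable[[Asset], Asset]:
    """Build a placeholder stage that only logs the step it represents."""
    def stage(asset: Asset) -> Asset:
        # A real stage would call the tool here; this sketch just records it.
        asset.stages_done.append(f"{name} ({tool})")
        return asset
    return stage


# The order mirrors the list above: ideate, standardize, enhance, animate.
PIPELINE = [
    make_stage("ideation", "Nano Banana"),
    make_stage("standardization", "Nano Banana Pro"),
    make_stage("enhancement", "AI Image Editor"),
    make_stage("animation", "Seedance 2.0"),
]


def run_pipeline(brief: str) -> Asset:
    asset = Asset(brief)
    for stage in PIPELINE:
        asset = stage(asset)
    return asset


result = run_pipeline("spring launch teaser")
print(result.stages_done)
```

Keeping the stages in a single list makes the order explicit and gives the team one place to add or remove a step.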

Operationalizing Quality Control

When you increase the volume of production, you inevitably increase the volume of errors. Generative media is prone to subtle hallucinations—extra fingers, impossible shadows, or blurred textures—that can pass a quick glance but fail under professional scrutiny.

Content teams are now implementing “AI QC” stages in their workflows. This is a designated point in the process where a human editor reviews the batch outputs from Banana Pro. They look specifically for “AI-isms” that signal a lack of quality.

This stage also exposes a limitation of the technology. There is currently no fully reliable automated way to ensure that a generative tool hasn’t hallucinated a detail that contradicts brand safety or physical reality. Teams that skip this step risk damaging their brand equity with “uncanny valley” content. The goal of using Nano Banana Pro is to achieve a professional aesthetic, but that aesthetic is only maintained through rigorous human oversight.
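One way to make the human QC stage enforceable is to record each reviewer decision and release only images that were explicitly approved. The sketch below assumes a hypothetical checklist (`QC_CHECKS`) and hypothetical `Review` records; it does not detect defects itself, it only tracks what a human editor found.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical checklist a human reviewer works through for each image.
QC_CHECKS = ("anatomy", "shadows", "textures", "brand_safety")


@dataclass
class Review:
    image_id: str
    reviewer: str
    failed_checks: set[str] = field(default_factory=set)

    def __post_init__(self) -> None:
        unknown = set(self.failed_checks) - set(QC_CHECKS)
        if unknown:
            raise ValueError(f"unknown QC checks: {sorted(unknown)}")

    @property
    def approved(self) -> bool:
        return not self.failed_checks


def split_batch(image_ids: list[str], reviews: list[Review]):
    """Return (approved, rejected, unreviewed) image ids for a batch."""
    by_id = {r.image_id: r for r in reviews}
    approved = [i for i in image_ids if i in by_id and by_id[i].approved]
    rejected = [i for i in image_ids if i in by_id and not by_id[i].approved]
    unreviewed = [i for i in image_ids if i not in by_id]
    return approved, rejected, unreviewed


batch = ["img-a", "img-b", "img-c"]
reviews = [Review("img-a", "sam"), Review("img-b", "sam", {"anatomy"})]
approved, rejected, unreviewed = split_batch(batch, reviews)
print(approved, rejected, unreviewed)
```

Treating unreviewed images as a separate bucket means nothing ships by default simply because nobody looked at it.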

The Role of Prompt Versioning

In a traditional software environment, version control is standard. In creative AI, it is often overlooked. Teams are beginning to adopt “Prompt Versioning” as a way to maintain consistency over long-term projects.

Instead of a designer keeping a private list of successful prompts, these prompts are stored in a central repository, categorized by the model version (e.g., Nano Banana Pro v2 vs. v3). This ensures that if a model is updated or changed, the team can look back at the logic used for previous campaigns and adjust accordingly.
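A central prompt repository can start as a small keyed store. The following is a minimal in-memory sketch under stated assumptions: `PromptRepository` and `PromptRecord` are hypothetical names, the version strings are illustrative, and a real team would more likely back this with a shared database or a version-controlled file.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class PromptRecord:
    campaign: str
    model_version: str   # e.g. "v2" or "v3"; labels are illustrative
    revision: int        # increments per campaign and model version
    prompt: str


class PromptRepository:
    """In-memory stand-in for a shared prompt store."""

    def __init__(self) -> None:
        self._records: list[PromptRecord] = []

    def _matching(self, campaign: str, model_version: str) -> list[PromptRecord]:
        return [
            r for r in self._records
            if r.campaign == campaign and r.model_version == model_version
        ]

    def save(self, campaign: str, model_version: str, prompt: str) -> PromptRecord:
        revision = len(self._matching(campaign, model_version)) + 1
        record = PromptRecord(campaign, model_version, revision, prompt)
        self._records.append(record)
        return record

    def latest(self, campaign: str, model_version: str) -> PromptRecord | None:
        matches = self._matching(campaign, model_version)
        return matches[-1] if matches else None


repo = PromptRepository()
repo.save("spring", "v2", "ceramic mug, soft light")
repo.save("spring", "v2", "ceramic mug, soft morning light, pastel palette")
repo.save("spring", "v3", "ceramic mug, morning light, pastel palette")
print(repo.latest("spring", "v2"))
```

Because older revisions are never overwritten, the team can compare what worked on one model version against what was needed on the next.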

The Cost of Speed: Managing Computational Resources

Operationalizing generative tools also means managing the commercial side of creative work. High-fidelity models like Banana Pro require more credits or processing power than lighter models.

Smart teams categorize their needs:

- Low-Stakes Content: Internal memos, placeholder graphics, or temporary social stories are handled by Nano Banana.

- High-Stakes Content: Paid ads, website assets, and client-facing presentations are reserved for Nano Banana Pro.

This tiered approach ensures that teams aren’t wasting their most powerful resources on assets that don’t require high-level detail. It is a pragmatic way to scale without blowing the budget on premium generation credits.
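The tiering rule is simple enough to encode, which also makes budget estimates easy before a batch is run. In this sketch the asset type labels, the model identifiers, and especially the credit costs are invented for illustration; actual pricing is not stated here.

```python
# Asset categories taken from the two tiers above; the labels are hypothetical.
LOW_STAKES = {"internal_memo", "placeholder", "social_story"}
HIGH_STAKES = {"paid_ad", "website_asset", "client_presentation"}

# Hypothetical credit costs per image, for illustration only.
CREDITS_PER_IMAGE = {"nano-banana": 1, "nano-banana-pro": 5}


def choose_model(asset_type: str) -> str:
    """Route an asset type to the lighter or the higher-fidelity model."""
    if asset_type in HIGH_STAKES:
        return "nano-banana-pro"
    if asset_type in LOW_STAKES:
        return "nano-banana"
    raise ValueError(f"unclassified asset type: {asset_type!r}")


def estimate_credits(requests: dict[str, int]) -> int:
    """Sum the credits for a batch given {asset_type: image_count}."""
    return sum(
        CREDITS_PER_IMAGE[choose_model(asset_type)] * count
        for asset_type, count in requests.items()
    )


# 10 paid ads at 5 credits plus 40 placeholders at 1 credit.
print(estimate_credits({"paid_ad": 10, "placeholder": 40}))  # 90
```

Raising an error for unclassified asset types forces the team to decide the tier explicitly instead of defaulting to the expensive model.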

Addressing the “Sameness” Problem

A common criticism of generative AI is that it creates a “look.” If every team uses the same base models, the internet starts to look remarkably uniform. Content teams avoid this by introducing “noise” or “style modifiers” that are unique to their brand.

Instead of relying on the default aesthetic of Banana Pro, teams often mix their own custom-shot photography with the AI generation. This “hybrid” approach—using AI to extend a real photograph rather than replace it—is currently the most effective way to maintain a unique brand voice while still gaining the efficiency of generative tools.

There is an inherent uncertainty in how these styles will evolve as models are continuously retrained. What works today in Nano Banana might look slightly different six months from now as the underlying weights are adjusted. Teams must remain agile, treating their prompt libraries as living documents rather than static manuals.

Training the Team: From Designers to Operators

The shift toward tools like Banana Pro requires a different skillset. We are seeing the emergence of the “Creative Technologist” or “AI Operator”—someone who understands design principles but also understands the latent space of a model.

Training a team to use the AI Image Editor effectively is about more than just clicking buttons; it’s about understanding composition and how AI interprets pixel data. A designer who knows how to “mask” and “inpaint” can often achieve more in thirty minutes than a prompt-only user can achieve in three hours.

This evolution is not without friction. Many designers feel that generative tools diminish the “craft” of their work. However, when operationalized correctly, these tools actually remove the “drudgery” of design—the repetitive tasks like background removal, resizing, and lighting adjustments—allowing the team to focus on the high-level conceptual work.

Building a Repeatable Asset Pipeline

The ultimate goal of operationalizing Nano Banana Pro is to build a repeatable pipeline. This means a request can come in for a set of visual assets, and the team can follow a documented process to produce high-quality, on-brand results every time.

This pipeline typically follows this structure:

- Standardized Ingest: Defining the theme and style using the central prompt library.

- Batch Generation: Running the initial set of images through Nano Banana Pro.

- Collaborative Refinement: Using the AI Image Editor for localized fixes and brand-specific adjustments.

- Export and Archive: Tagging the final assets and the prompts used to create them for future reference.
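The final Export and Archive step can be sketched as a manifest entry that ties each finished file to the prompt and model that produced it. The field names below are hypothetical, and the image bytes are a stand-in for a real exported file.

```python
import hashlib
import json


def archive_entry(image_bytes: bytes, prompt: str, model: str, tags: list[str]) -> dict:
    """Describe one finished asset so it can be found and reproduced later."""
    return {
        # A content hash identifies the exact file even if it is renamed.
        "sha256": hashlib.sha256(image_bytes).hexdigest(),
        "prompt": prompt,
        "model": model,
        "tags": sorted(set(tags)),  # de-duplicated and stable ordering
    }


entry = archive_entry(
    image_bytes=b"placeholder for exported image data",
    prompt="ceramic mug, soft morning light, pastel palette",
    model="Nano Banana Pro",
    tags=["spring", "hero", "spring"],
)

# One JSON object per line is easy to append to and to search.
manifest_line = json.dumps(entry, sort_keys=True)
print(manifest_line)
```

Storing the prompt next to the file hash means a future request for a similar asset can start from the exact wording that produced an approved result.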

By treating generative AI as a component of a larger system rather than a standalone miracle, content teams can finally achieve the scale that was promised at the start of the AI boom. The focus remains on consistency, quality control, and a deep understanding of the tool’s strengths and its inevitable limitations.

The future of content production isn’t about replacing the human creator; it’s about giving that creator a more powerful engine. With the right operational framework, tools like Nano Banana Pro become less about “making images” and more about “building worlds.”