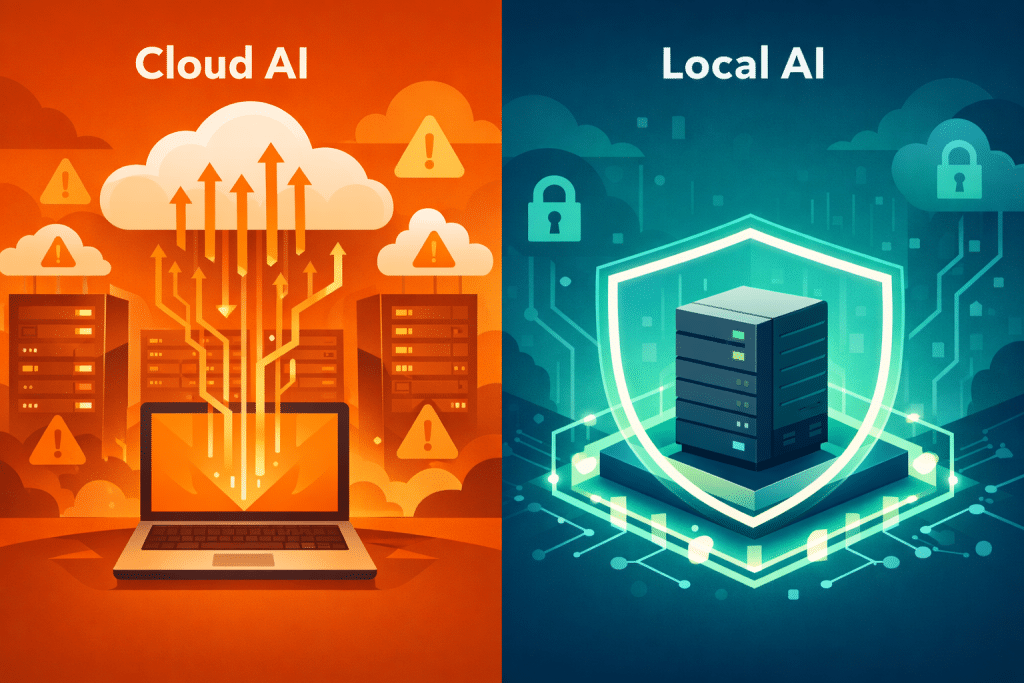

The rise of agentic AI has given businesses an unprecedented ability to automate complex workflows, from customer support triage to code deployment pipelines. But there is a hidden cost that many companies overlook: when an AI agent processes your data, that data often travels through third-party cloud servers you do not control.

For entrepreneurs building companies on proprietary data, trade secrets, and customer trust, this is not just a technical concern. It is a strategic vulnerability. The solution gaining momentum in 2026 is a concept called local-first AI agents — autonomous AI systems that run entirely on infrastructure you own.

This article is for business leaders, startup founders, and technical decision-makers evaluating how to deploy AI automation without compromising data sovereignty.

What Are Local-First AI Agents?

Unlike conventional AI tools that depend on cloud APIs, local-first AI agents operate on your own hardware: a Mac, a Linux server, a NAS device, or even a Raspberry Pi. They process data, execute tasks, and store memory locally, so sensitive information does not have to leave your network.

The concept is not entirely new. Self-hosted software has existed for decades. What is new is the combination of persistent autonomy and local execution. These agents do not just respond to prompts — they run scheduled jobs, react to events, maintain long-term memory, and take real actions across platforms like Slack, GitHub, and Telegram.

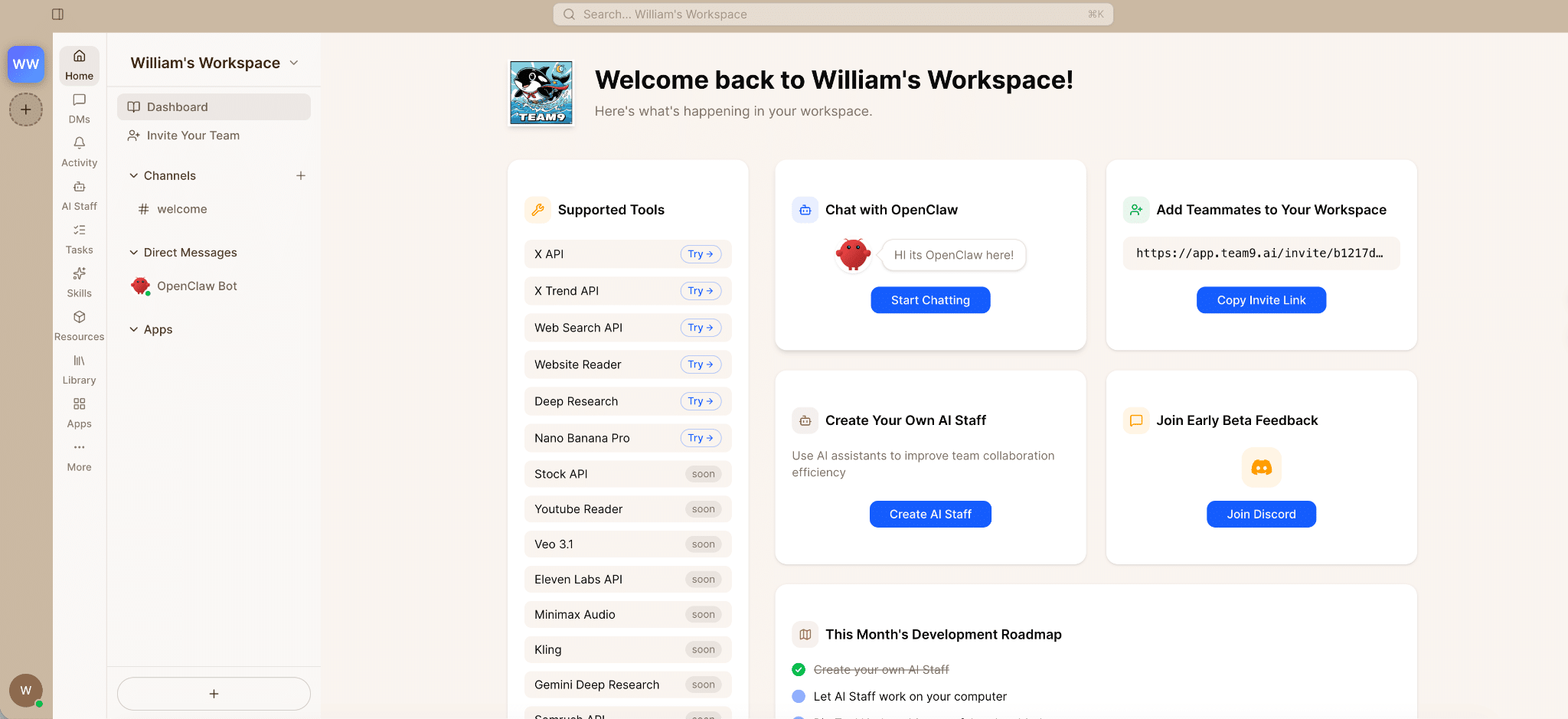

OpenClaw, an open-source agent runtime, exemplifies this approach. It provides a complete execution environment with cron-based scheduling, editable Markdown memory files, and built-in safety rails — all running on hardware the user controls. According to a 2025 Cisco report, 92% of organizations cite data privacy as a top concern when adopting AI tools, making this local-first model increasingly relevant.
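To make the idea of editable Markdown memory more concrete, here is a minimal Python sketch of an agent step that appends timestamped notes to a local Markdown file and searches them later. The file name `memory.md` and the functions `remember` and `recall` are hypothetical names chosen for this example. This is not OpenClaw's actual API, only an illustration of memory that lives in a plain file you can open and edit.

```python
from datetime import datetime, timezone
from pathlib import Path


def remember(memory_file: Path, note: str) -> None:
    """Append one timestamped bullet to a local Markdown memory file."""
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M UTC")
    with memory_file.open("a", encoding="utf-8") as fh:
        fh.write(f"- [{stamp}] {note}\n")


def recall(memory_file: Path, keyword: str) -> list[str]:
    """Return the stored bullets that mention the keyword (case-insensitive)."""
    if not memory_file.exists():
        return []
    lines = memory_file.read_text(encoding="utf-8").splitlines()
    needle = keyword.lower()
    return [ln for ln in lines if ln.startswith("- ") and needle in ln.lower()]


if __name__ == "__main__":
    # Hypothetical location; any path on hardware you control would do.
    memory = Path("memory.md")
    remember(memory, "Nightly backup finished without errors")
    print(recall(memory, "backup"))
```

A scheduler such as cron could run a script built on these functions at fixed intervals, which is one plausible way to combine scheduled jobs with file-based memory.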

Why Data Sovereignty Matters More Than Ever

Three forces are driving the urgency behind data-sovereign AI:

1. Regulatory pressure is intensifying. The EU AI Act, GDPR enforcement actions, and emerging regulations in Asia-Pacific now impose strict requirements on where and how AI processes personal data. A McKinsey 2025 survey found that 67% of enterprises had to modify their AI deployments to comply with new data residency laws.

2. Supply chain attacks are targeting AI pipelines. In 2025 alone, multiple incidents exposed how cloud-hosted AI tools became vectors for credential theft and data exfiltration. When your AI agent runs locally, the attack surface shrinks to the hardware, network, and dependencies you manage yourself.

3. Competitive intelligence leaks are real. Every query you send to a cloud-based AI could theoretically be logged, analyzed, or used as training data. For companies in competitive markets — fintech, biotech, legal tech — this risk is unacceptable.

How Businesses Are Deploying Local-First AI Today

The practical applications are already diverse:

– Automated monitoring and alerting: Agents that watch server logs, social media mentions, or competitor pricing — all without exposing your monitoring criteria to external services.

– Internal knowledge management: AI that indexes your company wiki, Slack history, and documents, providing instant answers without sending proprietary content to third-party APIs.

– Development workflow automation: Agents that manage GitHub issues, run code reviews, and deploy updates, keeping your source code on-premise.
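As a rough illustration of the monitoring use case in the list above, the following Python sketch checks log lines against alert rules entirely on the local machine. The rule names, the sample log lines, and the `scan_log` function are assumptions made for this example and do not come from any particular agent runtime.

```python
import re
from collections.abc import Iterable

# Hypothetical alert rules; they stay on your machine alongside the logs.
ALERT_PATTERNS = {
    "error": re.compile(r"\bERROR\b"),
    "auth_failure": re.compile(r"authentication failed", re.IGNORECASE),
}


def scan_log(lines: Iterable[str]) -> list[tuple[str, str]]:
    """Return (rule_name, line) pairs for every line that matches a rule."""
    alerts = []
    for line in lines:
        for name, pattern in ALERT_PATTERNS.items():
            if pattern.search(line):
                alerts.append((name, line.rstrip("\n")))
    return alerts


if __name__ == "__main__":
    sample = [
        "2026-01-10 12:00:01 INFO service started\n",
        "2026-01-10 12:00:05 ERROR disk quota exceeded\n",
        "2026-01-10 12:00:09 WARN Authentication failed for user admin\n",
    ]
    for rule, text in scan_log(sample):
        print(f"[{rule}] {text}")
```

Because both the rules and the log data are read from local memory and disk, nothing about what you monitor has to be shared with an external service.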

Platforms like Team9 AI Workspace are simplifying this deployment by providing plug-and-play setups that eliminate the complexity of manual configuration. Instead of spending hours on Node.js environments and security hardening, teams get production-ready agent infrastructure with sensible defaults in minutes.

Key Takeaways

– Local-first AI agents run on your own hardware, keeping sensitive data within your control

– 92% of organizations rank data privacy as a top AI adoption concern (Cisco, 2025)

– Regulatory compliance, supply chain security, and competitive intelligence protection are the three key drivers of this shift

– Open-source runtimes make self-hosted AI agents accessible to teams of all sizes

– Plug-and-play deployment platforms reduce setup time from hours to minutes

What to Consider Before Making the Switch

Before adopting local-first AI agents, evaluate these factors:

| Factor | Cloud AI | Local-First AI |
|---|---|---|
| Data privacy | Shared infrastructure | Full control |
| Setup complexity | Instant (SaaS) | Moderate to low (with managed platforms) |
| Ongoing cost | Per-API-call pricing | One-time hardware + energy |
| Customization | Limited by provider | Fully customizable |
| Compliance | Depends on vendor | You control the audit trail |

The trade-off is clear: cloud AI offers convenience, while local-first AI offers control. For businesses where data is the product — and trust is the brand — the choice is becoming obvious.
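The ongoing-cost comparison can be turned into a simple break-even estimate. The sketch below is a back-of-the-envelope calculation, not a pricing model: the function name `breakeven_months` and all figures in the usage example are placeholder assumptions, and it ignores maintenance, staff time, and hardware replacement.

```python
import math


def breakeven_months(hardware_cost: float, monthly_energy_cost: float,
                     monthly_api_cost: float) -> float:
    """Months until cumulative local cost equals cumulative cloud API cost.

    Returns math.inf when running locally does not save money each month.
    """
    monthly_saving = monthly_api_cost - monthly_energy_cost
    if monthly_saving <= 0:
        return math.inf
    return hardware_cost / monthly_saving


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    months = breakeven_months(hardware_cost=1200.0,
                              monthly_energy_cost=20.0,
                              monthly_api_cost=220.0)
    print(f"Break-even after {months:.1f} months")
```

Plugging in your own hardware quote, electricity estimate, and current API bill gives a first approximation of when a local deployment would pay for itself, if it does at all.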

Conclusion

The agentic AI revolution does not require you to hand over your most sensitive data to third parties. Local-first AI agents offer a path to full automation with full sovereignty. As regulations tighten and data breaches grow more costly, businesses that control their own AI infrastructure will hold a decisive strategic advantage.

The question is no longer whether to automate — it is whether you can afford to automate on someone else’s terms.