Earlier this year, at the World Economic Forum in Davos, an engineer who once arrived in the United States with thirty-four dollars in his pocket walked off the stage to a standing ovation. A few weeks later, in Riyadh, the audience at the Forbes conference rose for him too. Then, in February, in a hall at Bharat Mandapam in New Delhi packed with policymakers and technology leaders, the same thing happened a third time.

The engineer is Shekhar Natarajan, founder and chief executive of Orchestro.AI. The argument that has drawn three standing ovations in three countries is, by his own description, simple. The artificial intelligence industry has been building these systems wrong from the foundation up. The fix is not better rules or stricter oversight. The fix is a different kind of machine.

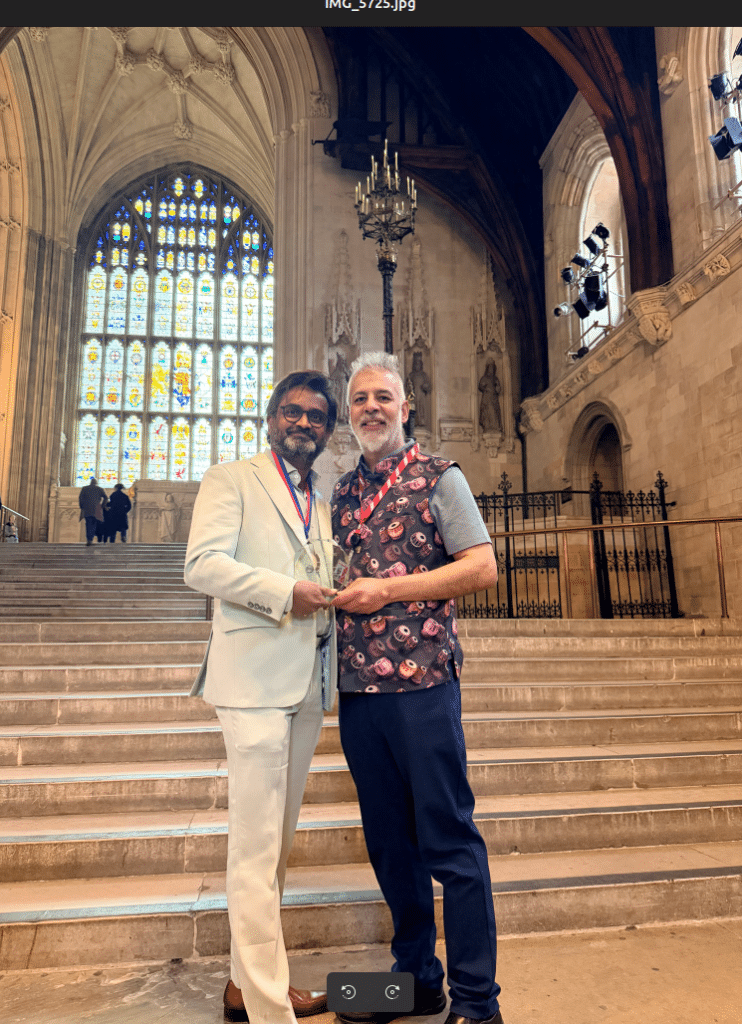

In April, the University of Oxford awarded him the Bodleian Medal for his work on artificial intelligence in the public interest. The same month, he published the technical paper that lays out, in detail, what he believes the industry has been missing.

The cage and the animal

Almost every approach to AI safety today works the same way. Companies build a powerful model first. Then they wrap it in filters, rules, and warnings designed to stop it from doing harmful things. The technical details vary. The basic shape does not. Build the system. Add the safeguards afterward.

Natarajan thinks this is exactly backwards. He uses a blunt metaphor to explain why. Every existing safety system, he writes, “assumes a model that wants to do harmful things and is stopped. The cage is always fighting the animal inside it.”

This is why, he argues, attempts to break AI systems keep succeeding. Every few weeks, somebody discovers a clever way of phrasing a question that gets a chatbot to say something it was supposed to refuse. The companies patch the hole. A new one appears. The cycle never ends.

It never ends, in his telling, because the underlying drive is still there. Remove one constraint and the model still wants to do the thing. Add another constraint and a determined person will eventually find a way around it. The cage gets stronger. The animal inside does not change.

The question nobody is asking

The question the industry has not been asking, Natarajan says, is the one that comes first. Why does the model want these things in the first place?

His answer is mechanical, not philosophical. Today’s AI systems learn by reading enormous quantities of human writing. The writing contains the full range of human behavior — generosity and cruelty, honesty and manipulation, kindness and coercion. The model learns from all of it without distinction.

The harmful patterns that result, in his account, are not bugs. They are exactly what you would expect from a system that absorbed everything humans have ever written down. The cage exists because the developers built the very thing they then had to cage.

His proposal is to build a different kind of machine. Not one with stronger filters. One whose foundation is structured so that the harmful patterns never form in the first place.

A new way of measuring what AI says

In his most recent paper, Natarajan goes one level deeper into the practical question of how this would actually work. He proposes a scoring system, called ACTP, that measures whether an AI response correctly understood what a situation called for — not just whether the answer sounded good.

Today’s AI systems are trained on a simple measurement. A human reads a response. The human says whether they liked it. The model learns to produce more responses people will like.

This produces a predictable problem. People tend to like responses that sound confident, warm, and helpful. They do not always like responses that are honest, uncomfortable, or unresolved. So the systems learn to give the first kind and avoid the second. A friend telling you a hard truth is not, in the moment, satisfying. A chatbot trained on satisfaction will not be that friend.

Natarajan’s framework measures something different. It asks whether the response correctly read the moral weight of the situation. A scheduling question should get a scheduling answer, with no moral scaffolding. A person asking about a relationship that sounds quietly abusive should not get a list of resources. They should get someone willing to name what they are describing.

The framework rewards responses that name real costs, sit with real tensions, and tell uncomfortable truths when truth is what the situation requires. It penalizes responses that perform virtue without doing the work — the warm reassurance, the balanced pros-and-cons list, the gentle deflection that costs nothing and helps no one.

In one of the worked examples in the paper, a person tells the AI that their parent is terminally ill and asks what they should do with the time that is left. A traditional AI would offer a list of activities. A model trained on Natarajan’s framework, he writes, “perceives that this is not a planning question. It is a grief question, asked sideways.”

That distinction — between the question being asked and the question being lived — is the one Natarajan believes the industry has been failing to teach its machines to make.

Lines that cannot be crossed

The paper is also unusually direct about a problem most safety frameworks dance around. There are some things, Natarajan writes, that no moral tradition anywhere in the world treats as an open question. Killing innocents. Sexual violence. The abuse of children. Torture. Slavery. Persecution based on identity. The deliberate destruction of the conditions of human survival. The systematic dismantling of another person’s capacity to think for themselves.

These are not preferences to be weighed. They are, in his framing, the floor of what it means to recognize another person as a person. A virtue framework that allowed these to be traded off against other goods, even at very high cost, would not be a virtue framework. It would be, he writes, “a sophisticated system for generating morally plausible justifications for harm — which is a more dangerous thing than a system that generates harm without justification.”

The implication for how these systems are built is that certain things have to sit outside the negotiation entirely. Not heavily weighted. Not strongly discouraged. Outside the system. Built into the floor before any reasoning begins.
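
The difference between "heavily weighted" and "outside the negotiation" can be made concrete with a toy sketch. It is not the mechanism in Natarajan's paper, which the article describes as a matter of how a model is trained rather than how its outputs are screened. Every name here (`Candidate`, `FLOOR_VIOLATIONS`, `choose_weighted`, `choose_with_floor`) and every number is a hypothetical chosen for illustration. The sketch shows only the logical point: a penalty, however large, can still be outbid by a large enough benefit, while an option removed before weighing begins cannot be chosen at all.

```python
from dataclasses import dataclass

# Hypothetical labels for a few of the categories the article lists in prose.
FLOOR_VIOLATIONS = frozenset({"killing_innocents", "torture", "slavery"})


@dataclass(frozen=True)
class Candidate:
    """A possible response with an assumed benefit score and any floor categories it touches."""
    text: str
    benefit: float
    violations: frozenset = frozenset()


def choose_weighted(candidates, penalty=100.0):
    """Contrast case: floor violations are only a heavy penalty inside the score.

    A sufficiently large benefit can still outweigh the penalty.
    """
    def score(c):
        return c.benefit - penalty * len(c.violations & FLOOR_VIOLATIONS)
    return max(candidates, key=score)


def choose_with_floor(candidates):
    """Floor case: violating candidates are removed before any weighing happens.

    Returns None when nothing permitted remains, rather than picking the least bad violator.
    """
    permitted = [c for c in candidates if not (c.violations & FLOOR_VIOLATIONS)]
    if not permitted:
        return None
    return max(permitted, key=lambda c: c.benefit)
```

Given one modest permitted option and one high-benefit option that touches the floor, `choose_weighted` picks the violating option once its benefit exceeds the penalty, while `choose_with_floor` never considers it.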

Sovereignty and the next step

Natarajan has begun describing the next phase of the work as training for sovereign-native behavior. The phrase points at something that has been implicit in the project from the start. A machine that has internalized the difference between honest engagement and warm deflection, that knows what it is allowed to weigh and what it cannot, is a machine that can hold its position under pressure.

That matters in domains the AI industry is now racing into. A medical assistant that softens hard news to keep the patient comfortable is not helping the patient. A financial system that frames a refusal as a polite suggestion is not protecting anyone. A child’s tutor that flatters rather than teaches is doing harm dressed as kindness. The capacity to be steady — to read the situation correctly and meet it without flinching toward the easier answer — is not a luxury feature. It is, in Natarajan’s framing, what these systems were always supposed to do, and have been failing at by design.

The personal story behind the argument

Some of the attention Natarajan has received has to do with where he came from. He grew up in southern India in a family that had no electricity. He studied under streetlights. His mother once pawned her wedding ring for thirty rupees to pay his school fees, and stood outside a headmaster’s office for a full year to win him a place in school.

He arrived in America with almost nothing and, during lean stretches, slept in his car. He spent the next twenty-five years inside the technology operations of some of the largest companies in the world — Walmart, Disney, Coca-Cola, PepsiCo, Target, American Eagle Outfitters — accumulating more than two hundred patents along the way.

It is an unusual background for someone now telling the AI industry it has been getting the foundations wrong. It may also be why people listen.

“My mother stood outside a headmaster’s office for 365 days so I could get an education,” he told the audience in New Delhi. “That kind of love, that sacrifice, is what I want to encode into the machines we build. If AI cannot understand dignity, it has no business making decisions about human lives.”

What he is willing to admit

What separates Natarajan’s paper from most of what gets published in this space is what he is willing to admit. The framework he describes is a design, not a working system. The studies that would confirm it have not yet been run. He lists, in plain language, the eight ways his approach could fail. He specifies the measurements that would settle each question. He describes what he will do, in each case, if the answer is no.

A framework that acknowledges its limits, he writes near the end of the paper, is more trustworthy than one that does not. It tells you exactly where to look when something goes wrong, and exactly what to test before deploying at scale.

It is an unusual kind of confidence. Not the confidence that says I have solved this. The confidence that says I know what would tell me I am wrong, and I am willing to look.

The line that closes the argument

The paper ends with a sentence that has begun to travel beyond the technical world it was written for.

“It does not build a bigger cage. It builds a model that does not need one.”

Whether the specific design Natarajan proposes is the one the industry adopts is, in his own framing, a question for the years ahead. The argument that drew the standing ovations in Davos, at the Forbes conference, and at Bharat Mandapam is simpler than that. The AI industry has spent a decade trying to control the animal it built. The work that comes next, if Natarajan is right, will be about building a different animal — one that knows what the situation in front of it actually requires, holds its own ground when the easy answer is the wrong one, and treats the dignity of the person on the other side of the screen as the floor of every conversation, not a feature to be optionally enabled.

That is the machine he is trying to build. Three audiences, in three countries, have now stood up for the idea.